Both types of meters have their advantages and disadvantages.

The analogue type is great for seeing how something is varying, so it is perfect if you are tuning something up and want to see the max value, etc., or if there is something occasionally causing a drop or surge. By their nature (mostly, ignoring VTVMs, etc.) they take a non-trivial current to operate, with better ones typically being 50 µA full scale (or 20 kohm/volt as usually indicated). This is bad if you don't want to load a circuit, but good if you want to check for a voltage source that is capable of delivering any non-trivial current.
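To put rough numbers on the loading point, here is a minimal sketch that treats the circuit under test as an ideal voltage source behind a series resistance. The 5 V source, the 100 kohm source resistance and the 10 V range are made-up example values, and the function names are just for illustration; the 10 Mohm figure is the typical digital meter input resistance.

```python
def meter_resistance(full_scale_amps, range_volts):
    """Input resistance of a moving-coil voltmeter on a given voltage range."""
    ohms_per_volt = 1.0 / full_scale_amps  # 50 uA full scale -> 20 kohm/volt
    return ohms_per_volt * range_volts


def indicated_voltage(open_circuit_volts, source_ohms, meter_ohms):
    """Voltage the meter shows once it loads the source (simple divider)."""
    return open_circuit_volts * meter_ohms / (source_ohms + meter_ohms)


# Assumed example: 5 V behind 100 kohm, analogue meter on its 10 V range.
analogue_ohms = meter_resistance(50e-6, 10.0)  # about 200 kohm
digital_ohms = 10e6                            # typical digital meter input

source_volts = 5.0
source_ohms = 100e3

analogue_reading = indicated_voltage(source_volts, source_ohms, analogue_ohms)
digital_reading = indicated_voltage(source_volts, source_ohms, digital_ohms)

print(f"analogue: {analogue_reading:.2f} V, digital: {digital_reading:.2f} V")
```

With those assumed values the analogue meter pulls the reading down to about two thirds of the true voltage, while the digital one is within about 1%. Make the source resistance small (a source that can deliver real current) and the two agree.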

Digital meters have the advantage of generally greater accuracy and many fancy features these days, but they are slow to react to voltage changes and often the controls and features are far from clear or obvious to use. The high impedance (typically 10 Mohm) is good for light loading of electronic circuits, but leads to the "phantom voltage" effect, where cables near energised circuits appear to be at a significant voltage.
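As a rough illustration of the phantom voltage effect, the sketch below models a disconnected conductor as coupled to a live one through a small stray capacitance, with the meter resistance completing the path to neutral. The 230 V 50 Hz supply, the 1 nF coupling capacitance and the 10 kohm comparison load are all assumptions picked for the example, not measured values, and the model ignores the conductor's own capacitance to earth.

```python
import math


def phantom_reading(mains_volts, freq_hz, coupling_farads, meter_ohms):
    """RMS voltage across the meter when fed through a series stray capacitance."""
    reactance = 1.0 / (2.0 * math.pi * freq_hz * coupling_farads)
    # Series C and R: the magnitude of the divider is R / sqrt(R^2 + Xc^2).
    return mains_volts * meter_ohms / math.hypot(meter_ohms, reactance)


# Assumed example values: 230 V 50 Hz mains, 1 nF of stray coupling.
high_z = phantom_reading(230.0, 50.0, 1e-9, 10e6)  # 10 Mohm digital meter
low_z = phantom_reading(230.0, 50.0, 1e-9, 10e3)   # hypothetical 10 kohm load

print(f"10 Mohm input: {high_z:.0f} V, 10 kohm load: {low_z:.2f} V")
```

Under these assumptions the 10 Mohm meter shows well over 200 V on a wire that is not connected to anything, while a load of a few kohm collapses it to under a volt, which is why a reading that vanishes under load is not a real source.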

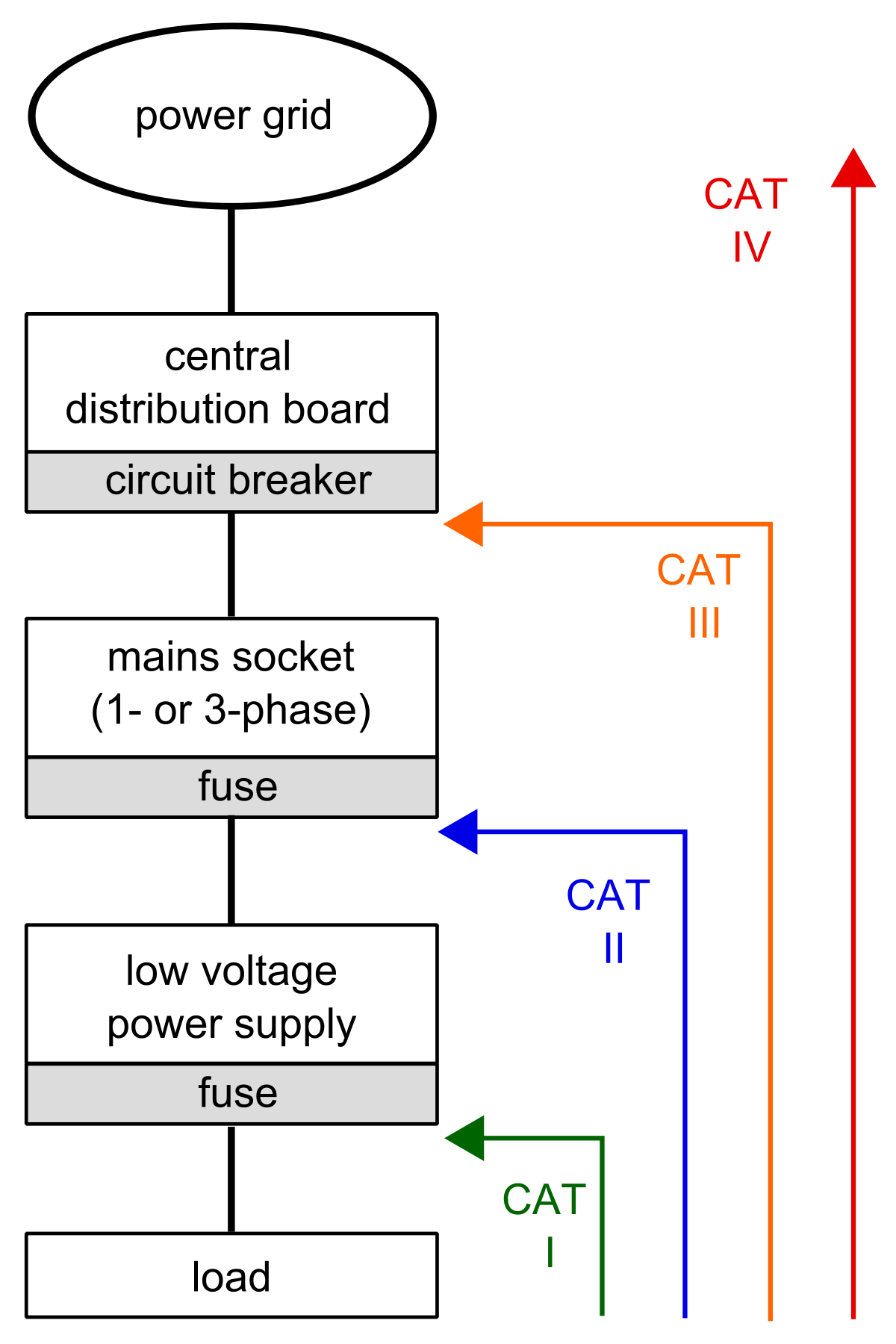

But if you are working on power systems as an electrician you absolutely must have at least CAT-III to 300V (domestic single phase work) and ideally a higher rating such as CAT-IV to 600V.

A year or two ago I read a rather harrowing report on deaths in the USA electrical industry and there were a few cases of multimeters being a factor. Either the meter was not CAT rated or, in at least one case, it was a good CAT-IV Fluke meter, but the guy was using it for continuity on an MV circuit that was accidentally energised to its usual 3.3kV (above the meter's rating) and he died from the resulting burn injuries.